Letta's next phase

Every closed foundation lab today is moving towards developing not just models, but also agents: the harness around models that enables computer use, memory, skills, subagents, and failure recovery.

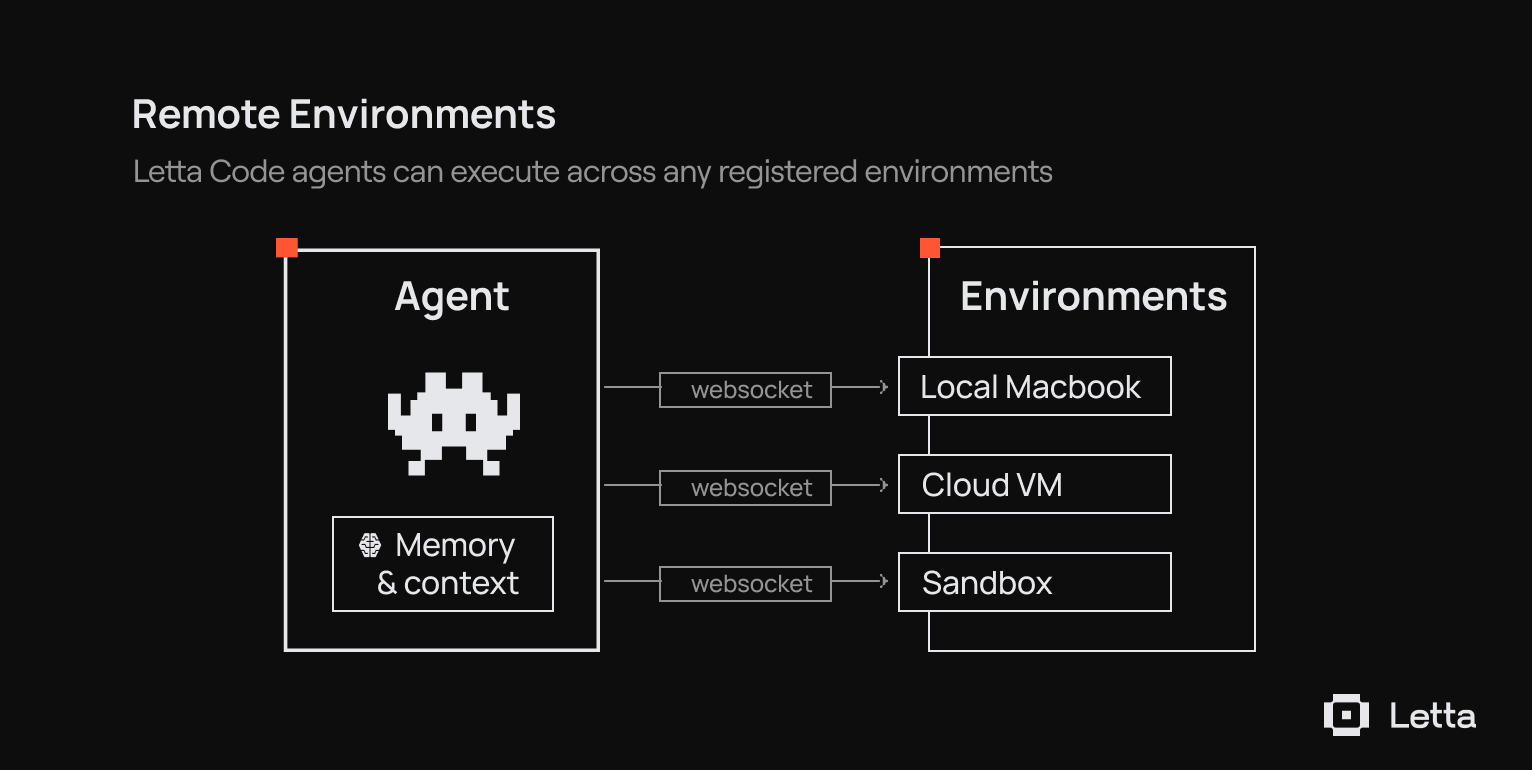

The most powerful harnesses today leverage computer use, injecting agents into environments where they are free to execute scripts and modify state. Computer use has been an inflection point that has enabled significantly more general-purpose and powerful agents.

Letta has always been about memory and personalization. We made a bet at the start of this company that memory was the key to self-improving artificial intelligence, and we are now applying that focus to the next generation of agent systems. With Letta Code, we are building memory systems into an open, model-agnostic agent harness.

Letta Code is our flagship

Letta Code is a model-agnostic agent harness with persistent memory. It gives underlying language models frontier "agent" capabilities - computer use, skills, subagents, transparent memory systems, and deployment paths - that are not tied to a single lab or model provider.

The closed labs are all moving in the same direction. OpenAI is building computer use directly into its Responses API. Anthropic has Claude Code and the Agent SDK. Google has Gemini CLI and a handful of other agent API/SDK products.

We think the world needs a different option: Letta Code and the Letta Code SDK as an open, extensible runtime that works with every model. You own the memory. You choose the model. You can see how the system works, down to the exact input and output tokens of the LLM itself.

What gets better

Letta is focusing on the primitives that allow anyone to deploy stateful agents with real memory that isn't locked down to a single provider.

Memory moves from specialized tools that edit memory in a database to general-purpose computer-use tools, such as bash, that operate on memory projected into git-backed files (context repositories), also known as "MemFS".
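To make the shift concrete, here is a minimal sketch of a memory edit expressed as an ordinary file operation followed by a git commit. It does not use any Letta API: the file name `human.md`, the helper names, and the commit message format are all hypothetical, and the real layout of a context repository may differ.

```python
import shutil
import subprocess
import tempfile
from pathlib import Path

HAVE_GIT = shutil.which("git") is not None


def git(repo: Path, *args: str) -> str:
    """Run a git command inside `repo` and return its stdout."""
    result = subprocess.run(
        ["git", "-c", "user.name=agent", "-c", "user.email=agent@example.com", *args],
        cwd=repo,
        check=True,
        capture_output=True,
        text=True,
    )
    return result.stdout


def replace_in_memory(repo: Path, rel_path: str, old: str, new: str) -> None:
    """Replace the first occurrence of `old` in a memory file, then commit the change."""
    target = repo / rel_path
    text = target.read_text()
    if old not in text:
        raise ValueError(f"{old!r} not found in {rel_path}")
    target.write_text(text.replace(old, new, 1))
    if HAVE_GIT:  # versioning is skipped when git is not installed
        git(repo, "add", rel_path)
        git(repo, "commit", "-q", "-m", f"memory: update {rel_path}")


def demo() -> str:
    """Create a throwaway repository, edit one (hypothetical) memory file, return its content."""
    with tempfile.TemporaryDirectory() as tmp:
        repo = Path(tmp)
        (repo / "human.md").write_text("Name: unknown\n")
        if HAVE_GIT:
            git(repo, "init", "-q")
            git(repo, "add", "human.md")
            git(repo, "commit", "-q", "-m", "memory: initial state")
        replace_in_memory(repo, "human.md", "unknown", "Alice")
        return (repo / "human.md").read_text()


if __name__ == "__main__":
    print(demo())
```

Because the memory is a plain file under version control, every change is a commit that can be inspected or reverted with standard git tooling, and an agent with shell access could make the same edit with its usual file-editing commands.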

Sleep-time compute gets more powerful. We pioneered the idea that agents should reflect, consolidate, and improve automatically. Now those workflows move client-side, where they can use the same computer, tools, and context.

Agent orchestration moves from pure server-side messaging to dynamic subagents and skills. Work can now be delegated, executed, and composed across agents.

Skills become the primary way to package and reuse agent capabilities. They are composable, efficient, and better aligned with how agents learn over time.

The Letta Code SDK makes these systems deployable at scale. The core API exposes the fundamental primitives around the agent. The SDK lets developers build on an open agent harness that not only delivers frontier coding and computer-use performance but is also memory-first: designed to build agents that actually learn and grow over time, and whose lifespans far exceed that of any underlying model.

What we are leaving behind

Many of our older features were built for a world where computer use was not the primary mechanism for agent action. They served their purpose - our original server-side agent pattern was designed to support a limited scope of what agents could do. Agent capabilities have grown far beyond our original design, and we are refocusing Letta around supporting and expanding those capabilities.

We are sunsetting a set of server-side features in favor of stronger client-side and runtime-native replacements:

- Letta Filesystem becomes actual filesystem access, context repositories, and computer use.

- Legacy server memory tools like core_memory_replace will be removed in favor of straightforward filesystem operations on git-backed context repositories.

- Templates will be replaced by the versioned Letta Code SDK and community tooling like lettactl.

- Identities move to the application layer using tags.

- Server-side MCP integrations give way to client-side skills.

- Server-side sleep-time agents will be replaced by a client-side subagent system.

- Hardcoded server-side multi-agent tools give way to subagents and dynamic agent-discovery skills that build on general-purpose API patterns.

- Tool rules (which constrain agent actions based on hard-coded rulesets) are deprecated to avoid inhibiting frontier capabilities.
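As an illustration of the identities item in the list above, the sketch below shows one way an application could map its own users to agents using tags. It is an assumption-laden example, not the Letta API: `AgentRecord`, the `user:<id>` tag convention, and both helper functions are hypothetical names introduced only for this sketch.

```python
from dataclasses import dataclass, field


@dataclass
class AgentRecord:
    """Application-side view of an agent: an ID plus free-form tags (hypothetical shape)."""
    agent_id: str
    tags: set[str] = field(default_factory=set)


def identity_tag(user_id: str) -> str:
    """Encode an application user as a tag; the `user:` prefix is an assumed convention."""
    return f"user:{user_id}"


def agents_for_user(agents: list[AgentRecord], user_id: str) -> list[str]:
    """Return the IDs of all agents tagged with the given user's identity tag."""
    tag = identity_tag(user_id)
    return [agent.agent_id for agent in agents if tag in agent.tags]


if __name__ == "__main__":
    registry = [
        AgentRecord("agent-1", {"user:alice", "support"}),
        AgentRecord("agent-2", {"user:bob"}),
        AgentRecord("agent-3", {"user:alice"}),
    ]
    print(agents_for_user(registry, "alice"))
```

In this arrangement the application owns the mapping between users and agents, and the agent runtime only needs to store and filter on tags.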

Transition path

We are focusing Letta on the execution model that best supports frontier agents. This shift gives agents more capability, more transparency, and more room to improve over time. We will make the transition practical with migration guides, office hours, direct support in Discord, Ezra, and clear timelines for every immediate and phased change.

Users should expect some features, such as tool rules and legacy tools, to be deprecated immediately, while larger features, such as templates and Letta Filesystem, will be deprecated by mid-April.

If you have questions about these changes or how they affect your systems, we want to hear from you.

The mission

Letta builds agents that learn. Agents with persistent memory, real computer access, and the infrastructure to improve from their own lived experience and work. Letta Code is the runtime that brings these together: git-backed memory, skills, subagents, and deployment that works across every model provider.
